Dear Readers,

In this Update for Product | Strategy | Innovation I will discuss a big data analytics company that is gaining traction as it transitions from primarily long-term government contracts to a growing number of corporate enterprise deployments across multiple industries.

This incumbent is Palantir Technologies. Only 3 companies have received Impact Level 6 clearance to offer cloud services to the Pentagon for sensitive classified information: Amazon Web Services, Microsoft and Palantir Technologies.

The inspiration for Palantir was PayPal’s fraud detection system. Following PayPal’s acquisition by eBay in 2002, PayPal co-founder Peter Thiel and several other PayPal alumni founded Palantir in 2003 to build systems to help prevent terrorist attacks in the wake of 9/11.

Gotham is Palantir’s government offering built out of long-standing collaborations across intelligence communities, defense departments, homeland security, military operations, and health services.

“Skate to where the puck is going, not where it has been.” - Wayne Gretzky

The experience Palantir gained designing and deploying systems for government agencies over almost 2 decades has informed how it builds systems that integrate across business functions and operations in the modern enterprise. Foundry is Palantir’s business offering, which uses an ontology to power its design and software architecture.

Airbus partnered with Palantir to build Skywise as a data platform service using Foundry to help its airline customers operate over 10,000 aircraft through certified partners. Skywise features include aircraft health monitoring, predictive maintenance when abnormal behavior is detected and improved operational reliability. Airbus is rapidly scaling Skywise services, which suggests the return on investment for customers is meeting key assumptions.

Artificial Intelligence (AI) and large language models (LLMs) have been a core feature of the systems Palantir routinely deploys. Palantir introduced AIP earlier this year in response to the demand for generative AI technology that meets the requirements of its government and corporate enterprise customers. These organizations do not want to share proprietary data with large language models where that data is commingled with other data.

Palantir allows deployments to include AI guardrails that control permissions for automation and specify where to include a human-in-the-loop. Palantir is working with early adopters to deploy AIP essentially at cost, or even at a loss, to build business cases for sustained deployments where Palantir is rewarded for operational gains and savings.
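To make the guardrail idea concrete, here is a minimal sketch of how a permission check with a human-in-the-loop threshold might look. This is my own illustration, not Palantir’s API: the names `Guardrail`, `Decision` and `evaluate`, the role names and the dollar limit are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO_APPROVE = "auto_approve"
    NEEDS_HUMAN = "needs_human"
    DENY = "deny"


@dataclass(frozen=True)
class Guardrail:
    """Hypothetical policy for one kind of AI-proposed action."""
    action: str
    allowed_roles: frozenset
    auto_limit: float  # largest impact (e.g. dollars) allowed without review


def evaluate(rule: Guardrail, role: str, impact: float) -> Decision:
    """Decide whether an AI-proposed action may run on its own."""
    if role not in rule.allowed_roles:
        return Decision.DENY           # caller lacks permission entirely
    if impact <= rule.auto_limit:
        return Decision.AUTO_APPROVE   # small enough to automate
    return Decision.NEEDS_HUMAN        # route to a person for sign-off


# Assumed example policy: planners may reorder parts, up to $10,000 unattended.
reorder = Guardrail("reorder_parts", frozenset({"supply_planner"}), auto_limit=10_000.0)
print(evaluate(reorder, "supply_planner", 2_500.0))
print(evaluate(reorder, "supply_planner", 50_000.0))
```

The point of the sketch is only that automation and human review can be separated by explicit, auditable rules rather than left to the model.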

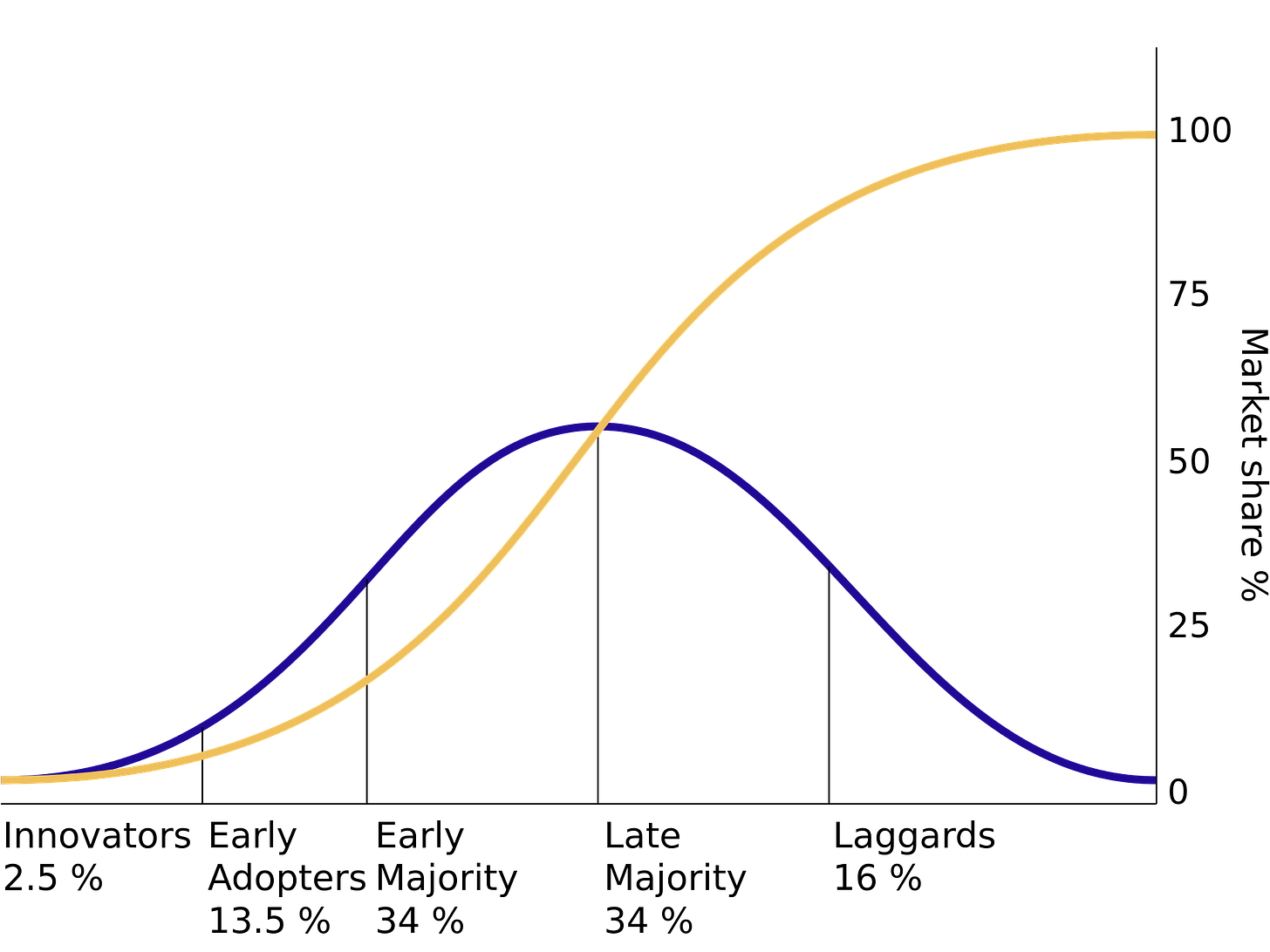

To fully understand the opportunity AI offers to tech companies like Palantir and their customers, we must first anticipate the diffusion of innovation that is sparked when a transformative technology like ChatGPT is introduced to the market and scales rapidly with easy access for consumers.

The Diffusion of Innovation Theory was proposed by E.M. Rogers in 1962 and divides adoption into 5 phases defined by the characteristics of the adopters: innovators, early adopters, early majority, late majority and laggards.

The hype and interest surrounding ChatGPT helped radiate demand worldwide very quickly. This hype, combined with easy access and a simple user interface for trying ChatGPT with text prompts, enabled ChatGPT to reach 1 million unique users within 5 days compared to 5 months for Spotify. ChatGPT reached 100 million unique users within 2 months.

However, ChatGPT is just a seed for the innovation needed to augment human intelligence at scale. Waves of innovation over the next decade are needed. But what does that look like and who are the participants? Personal computing over the 1980s and 1990s may offer a reasonable proxy for what can happen regarding artificial intelligence and machine learning.

Personal Computing as a proxy for Machine Learning innovation

I purchased my first personal computer (Apple IIe) as a college student to work on school projects whenever I needed to do so. I also ran a consulting business using the same personal computer based on software I had developed a few years earlier on an IBM System 23.

Millions of consumers and small businesses were also fueling personal computing innovation based on the rapid growth in demand for these products and related services.

Intel was founded in 1968, but its 80286 16-bit microprocessor introduced in 1982 changed the game for x86-based CPUs.

Microsoft was founded in 1975 to offer the popular mainframe programming language BASIC on the Altair personal computer, but later licensed an early version of DOS from Seattle Computer Products to work on an operating system to support the Intel 8088 CPU. DOS was purchased by Microsoft in 1981 and renamed MS-DOS.

IBM introduced the PC/AT based on the Intel 80286 CPU in 1984. The IBM PC/AT also used the MS-DOS operating system. IBM accelerated adoption of MS-DOS but the license was not exclusive.

3Com was founded in 1979 out of Xerox PARC to commercialize the Ethernet protocol and related hardware to create local area networks.

Dell Computer was founded in 1984. Michael Dell disrupted the computer dealer business model by selling PCs direct to consumers and small businesses. Dell also used Intel CPUs and MS-DOS until Microsoft Windows eventually took over.

Cisco Systems was founded in 1984 and introduced the Cisco 2500 Series of routers in 1993. This allowed companies to create networks of personal computers to equip staff with what they needed to do their work. This disrupted the mainframe computer business model.

This was a quick journey back in time, but the scenario has been repeated multiple times with personal, cloud and mobile computing. A step-change advance in semiconductor technology can seed a chain of innovation in downstream technologies.

STEP-CHANGE with advance in semiconductor technology (starting in 1984 for PCs)

Intel: x86-based CPUs

IBM: PC/AT innovator of x86 CPU adoption

Hyper-Scalers BUILD PLATFORM INFRASTRUCTURE

Microsoft: MS-DOS/Windows for x86 CPUs

Compaq (acquired by HP): PCs using x86 CPU, MS-DOS/Windows

Dell: PCs using x86 CPU, MS-DOS/Windows & direct-to-consumer business model

3Com: Network Interface Controllers and Ethernet series products for Local Area Networks

EXPAND LONG-TERM VALUE-CREATION on top of the new Platform Infrastructure

Cisco Systems: routers and computer networking equipment across an entire organization

Microsoft Office: suite of business productivity software

HP: personal computers, printers, monitors and accessories

Adobe: graphics and illustration software, document management

Oracle: database and enterprise software

Autodesk: architecture, engineering and construction software; product design, manufacturing and visual effects software

Intuit: financial and business management software; payroll and tax solutions

Electronic Arts: digital interactive entertainment and gaming software

These waves of innovation can also load the early stages of adoption into the first wave, where the Hyper-Scalers actively collaborate with semiconductor companies to define and advance the step-change technology. But the total addressable market expands significantly as the waves progress, because more value is captured by comprehensive hardware and software systems than by production of the core semiconductor units alone.

Intel was the primary beneficiary in the 1980s as multiple PC manufacturers scaled production of computers based on Intel’s x86 family of CPUs. Innovators and early adopters drove the high-performance requirements for the x86 CPUs. Among users, the primary beneficiary was the individual, who gained from the increase in personal performance a PC provided.

As PCs gained traction in the late 1980s and early 1990s, specific product features and business models consolidated the market to fewer PC manufacturers like Dell. Innovators and early adopters among large enterprises drove the requirements for auxiliary components to connect local computers into networks and add printers and enhanced graphics capabilities. The primary beneficiaries were teams, who gained from the collective increase in performance using this emerging platform infrastructure.

Cisco Systems enabled corporate-wide networks to connect every worker. Corporate-wide deployments of Microsoft Office and vertical solutions from Adobe, Oracle, Salesforce and other enterprise software companies tackled the biggest problems companies face across major business functions. The primary beneficiaries were companies made up of multiple teams of individuals.

Along these waves of innovation, Intel, Microsoft, Dell, and Cisco Systems were huge beneficiaries as adoption scaled and PC deployments expanded from individual consumers and small businesses to teams within larger enterprises and then to corporate-wide deployments.

Multiple waves of Innovation to realize full potential of Artificial Intelligence

Nvidia has gotten a lot of attention over the last 10 months since the introduction of ChatGPT. Nvidia’s AI-enabling GPUs are the leading semiconductor technology to train and deploy LLMs, and its A100 and H100 GPUs have led the market as LLMs have gained popularity. AMD, Intel and Samsung would also like to participate in the semiconductor opportunities AI provides. Taiwan Semiconductor and Samsung also benefit as foundries that fabricate these semiconductors.

STEP-CHANGE with advances in semiconductor technology (starting around 2020)

Nvidia launched Ampere A100 GPU in 2020 & Hopper H100 GPU in 2022

AMD MI300X launches in late 2023 to rival Nvidia H100

Tesla Dojo D1 microprocessor launched internally in 2023 to train neural networks for computer vision to enable full self-driving (FSD)

Intel is ramping up chip design and fabrication for AI applications to rival Nvidia and AMD

Taiwan Semiconductor (TSMC) leads semiconductor fabrication for AI applications

Samsung is advancing its semiconductor fabrication technology and processes to rival TSMC

Note: The step-change in semiconductor technology realized in 2020 with the Nvidia Ampere A100 GPU was significant. But this step-change in AI hardware was further enhanced by the step-change in AI software that resulted from the transformer model published in 2017.

These fundamental semiconductor building blocks must be organized into a higher tier of hardware. For AI and High-Performance Computing (HPC), the platform infrastructure becomes a cloud cluster with thousands of GPUs. A batch of work to train LLMs on large datasets can be done remotely in the cloud with available resources. This will likely become more specialized over time and co-located where renewable energy drives down a major input cost for HPC.

So for AI innovation to thrive beyond the core semiconductors, we need a range of cloud service providers to hyper-scale HPC capacity and offer these services over time at minimal cost to scale and take market share. This is a land grab over the next decade to build out HPC platform infrastructure using clusters of the latest semiconductor designs to advance AI technology.

Hyper-Scalers BUILD PLATFORM INFRASTRUCTURE

Microsoft Azure

Azure continues to expand HPC capacity across private, public and hybrid cloud services

Microsoft leverages strategic partnership with OpenAI to advance AI technology

Azure and OpenAI Services for internal and external partners

Microsoft Office incorporates OpenAI into all its products

Azure OpenAI Service for external partners

KPMG

Accenture

MicroStrategy

Meta

Amazon Web Services (AWS)

AWS continues to expand HPC capacity across private, public and hybrid cloud services

AWS Generative AI Innovation Center for external partners

Ryanair

Twilio

Lonely Planet

AWS Generative AI Accelerator (for startups)

Strategic Partners to build out HPC capabilities

Accenture

Hugging Face

Stability AI (Stable Diffusion)

X (formerly known as Twitter)

Tesla

Google Cloud Platform (GCP)

GCP continues to expand HPC capacity across private, public and hybrid cloud services

Google DeepMind absorbed Google Brain in 2023 to consolidate AI thought-leadership under one entity within Google

GCP AI external partnerships

SAP

Salesforce

Box

Accenture

Deloitte

KPMG

Cognizant

Mayo Clinic

Cloudflare R2 AI infrastructure for generative AI companies

Hut 8 HPC data centers and Bitcoin mining operations

Meta Platforms reprioritizes AI initiatives across research, infrastructure and products

Meta open-sources Llama 2 LLM for AI research and commercial use for free

Meta builds out its Research SuperCluster (currently at 5 exaflops)

Meta continues to build out AI tools like PyTorch

Tesla advances a full AI stack for HPC training and in-vehicle edge computing for full self-driving (FSD)

Hardware v4 FSD semiconductor chip, in-vehicle FSD computer and sensor array of cameras for 360-degree computer vision

Tesla Cloud to ingest edge cases pushed by Tesla EV fleet to the cloud to document exceptions when beta drivers intervene over FSD

Tesla Dojo supercluster to automate labeling edge cases and training FSD neural networks; release candidates for new FSD models are alpha tested internally within Tesla; successful candidates are released for additional testing to customers who have purchased FSD for its beta program

Tesla Dojo supercluster is expected to reach 100 exaflops in 2024

Tesla Dojo D1 chip is the primary building block for the Dojo supercluster along with Nvidia H100 GPU-based clusters

As the AI platform infrastructure builds out HPC capacity and capabilities, an efficient frontier emerges from the aggregation of all these resources. Eventually, adding more capacity at a given cost and level of performance no longer returns enough value. Until this efficient frontier is reached, the priority is simply to build out more computing capacity, much as PC manufacturers did in the 1980s and 1990s in the rush to disrupt more expensive forms of computing like mainframes with a terminal for each user.
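One simple way to read this efficient frontier is as the point where the value of the next unit of capacity drops below its cost. The sketch below is only an illustration under assumptions of my own: the logarithmic value curve, the `scale` parameter and the function names are hypothetical, not measured data.

```python
import math


def total_value(clusters: int, scale: float = 100.0) -> float:
    """Assumed concave value curve: each extra cluster adds less than the last."""
    return scale * math.log1p(clusters)


def clusters_to_build(cost_per_cluster: float, max_clusters: int = 1_000) -> int:
    """Keep adding clusters while the next one returns more value than it costs."""
    n = 0
    while n < max_clusters:
        marginal = total_value(n + 1) - total_value(n)
        if marginal <= cost_per_cluster:
            break  # the next cluster no longer pays for itself
        n += 1
    return n


# Cheaper capacity pushes the stopping point further out.
for cost in (10.0, 5.0):
    print(cost, clusters_to_build(cost))
```

Under these assumptions, falling hardware costs move the frontier outward, which is one reason the build-out phase can run for years before the focus shifts.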

Once the efficient frontier is reached, the next wave of innovation is about using that scaled platform infrastructure to extract more long-term value. Start-up ventures that participate in the build-out of software platform infrastructure may be able to cross over into this next horizon to survive. Otherwise, they will likely be acquired by incumbents trying to do the same.

The incumbent enterprise software providers are likely to be key beneficiaries of this transition into long-term value-creation. They have already generated vast proprietary data for enterprise customers who operate their businesses using these systems. Vertically integrating AI into their enterprise solutions can scale as the platform infrastructure capacity and capabilities expand.

EXPAND LONG-TERM VALUE-CREATION on top of the new Platform Infrastructure

Tesla has significant value-creation to gain and leverages its own AI platform infrastructure to realize this value-creation

FSD semiconductors, hardware and software-as-a-service and robotaxi business model

FSD repurposed to realize fleets of humanoid robots

Tesla Energy realizes Virtual Power Plants with stationary batteries and AI services deployed by utility companies

Palantir disintermediates AI platform infrastructure providers on top of large government and enterprise system deployments

Palantir solutions are preferred and deployed across large enterprises based on trust and control of proprietary data

AI platform infrastructure provider solutions become vertically-integrated, but substitutable into Palantir solutions

Amazon vertically integrates AI across all its products and services beyond AWS

Amazon Prime

Amazon Marketplace

Apple and Disney vertically integrate AI across all their products and services

Meta Platforms vertically integrates AI across all its products and services

X and xAI vertically integrate AI across all their products and services

Microsoft vertically integrates AI across all its products and services on top of large enterprise system deployments

Oracle vertically integrates AI across all its products and services on top of large enterprise system deployments

SAP vertically integrates AI across all its products and services on top of large enterprise system deployments

Salesforce vertically integrates AI across all its products and services on top of large enterprise system deployments

MicroStrategy vertically integrates AI across all its products and services for business intelligence and blockchain solutions on top of large enterprise system deployments

Palantir ontology- and AI-powered operations support every decision

To adequately cover Palantir would require a more comprehensive review than what I will cover in this Update. Palantir provides these details through their own communications. I may also cover more details in future Updates.

Palantir’s main products include Gotham, Foundry, Apollo and a new addition this year called AIP (or AI Platform).

Palantir doesn’t have to participate directly in the rush to build out semiconductors to realize the benefits AI provides. Palantir doesn’t even have to participate directly in the build out of the platform infrastructure to add HPC capacity and capabilities.

Palantir is skating to where the puck will be in the future when the efficient frontier for high-performance computing is reached to cost-effectively exploit all the capabilities AI provides. This is where the most value can be created for its large government and corporate customers. And Palantir can participate and monetize this value-creation.

Ontology-Powered Operations

One can define an ontology as what exists within a system and the relationships between all its entities. This helps in understanding business operations when inputs and outputs are measured and tracked from raw materials and component parts in the supply chain through manufacturing, inventory, distribution, and sales. Palantir maps the ontology using 3 layers, summarized as:

Semantic Layer - This is the base layer that maps the business into objects, entities and relationships.

Kinetic Layer - Real-time monitoring and process mining informs AI-driven actions and automation.

Dynamic Layer - AI-guided decisions, simulations and learning.
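As a rough illustration of how the three layers fit together, here is a toy model. It is a sketch under my own assumptions, not Palantir’s implementation: the `Ontology` class, its methods and the example objects are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Ontology:
    """Toy three-layer model; every name here is hypothetical."""
    objects: dict = field(default_factory=dict)  # semantic: id -> properties
    links: list = field(default_factory=list)    # semantic: (src, relation, dst)
    events: list = field(default_factory=list)   # kinetic: log of applied actions

    def add_object(self, obj_id, **props):
        self.objects[obj_id] = dict(props)

    def link(self, src, relation, dst):
        self.links.append((src, relation, dst))

    def apply(self, obj_id, prop, delta):
        """Kinetic layer: change live state and record what happened."""
        self.objects[obj_id][prop] += delta
        self.events.append((obj_id, prop, delta))

    def simulate(self, obj_id, prop, delta):
        """Dynamic layer: preview the result of an action without applying it."""
        return self.objects[obj_id][prop] + delta


ont = Ontology()
ont.add_object("plant_1", kind="factory")
ont.add_object("bolt_m8", kind="part", stock=500)
ont.link("plant_1", "consumes", "bolt_m8")

preview = ont.simulate("bolt_m8", "stock", -120)  # what-if, nothing changes
ont.apply("bolt_m8", "stock", -120)               # now record the real usage
```

The semantic layer is the map of objects and relationships, the kinetic layer is the record of actions against that map, and the dynamic layer asks "what if" before anything is committed.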

I would imagine Palantir deployments are staged to fully understand and map the ontology of an organization. But each deployment builds on the knowledge base to improve this process.

AI-Powered Operations

Ontology provides the multi-layer infrastructure and proprietary data within an organization over time to train LLMs. This enables AI-powered decision making using data and insights based on the operations of the organization. Palantir also works with organizations to control permissions for the data and models to protect proprietary data.

But the real benefit for Palantir and its customers has already been highlighted in this Update. What if the step-change in technology is a new discovery like the transformer model that inspired ChatGPT? That will have an impact in the research labs, but once the discovery and value-add are initially validated outside the company, Palantir can determine when and where to test the innovation through its deployed systems.

Palantir can conduct alpha and beta deployments for a potential step-change hardware and/or software technology to verify and validate the commercial assumptions around the value-add.

Palantir can leverage existing customers in specific industries where the ontology and platform infrastructure are already in place to vertically integrate the step-change technology. This allows the alpha and beta deployments to grow into limited launch deployments that validate the commercial assumptions more comprehensively.

Palantir can leverage insights gained from limited launches to build a strategy, tailored to each industry, for expanding long-term value creation for its customers.

So the model I have described, which has been repeated multiple times but has required a decade or more each time, can be compressed into 2 to 3 years when the right step-change technology emerges. Until then, Palantir can use its state-of-the-art technology to build out the platform infrastructure across hundreds of organizations and many industries around the world. Palantir turned profitable in 2023 to fuel this growth.

So when the next significant step-change AI technologies come to market, Palantir will be well positioned to vertically integrate them and exploit the advantages they offer.

Augmenting human intelligence will be achieved in stages, with performance for data-driven decisions improving over time. This is a marathon, not a sprint. The competition among companies in the business intelligence industry will be intense, and the journey will encounter controversy along the way.

Best,

Stephen

I’m long AMZN, HUT, MSTR, PLTR, and TSLA mentioned in this Update. Nothing in this Update is intended to serve as financial advice. Do your own research. The opinions and views expressed in this newsletter are those of the author. They do not purport to reflect the opinions, views or policies of any other organization, company or employer.