[U9] Tesla is all-in on AI at the edge

Tesla's autonomous navigation strategy challenges its competitors' key assumption

This week in Product | Strategy | Innovation, we continue a series of updates on Elon Musk’s vision for a sustainable energy future. This update focuses on autonomous navigation enabled by artificial intelligence (AI) and edge computing. But Tesla’s AI roadmap is about more than autonomous vehicle navigation: it is about building a full technology stack for computer vision. And unlike other vehicle manufacturers, Tesla does not use LIDAR; it is betting only on 360-degree Computer Vision with cameras. This makes applications beyond vehicle navigation possible. So why computer vision, and what makes up that technology stack?

Computer Vision is a leading use case for AI with deep learning

Computer Vision is one of the hottest areas of research and development because it touches so many disciplines, from computer science, mathematics and physics to engineering, and because its commercial applications have huge total addressable markets, such as the facial recognition and image classification used at Facebook, Google, Apple and Amazon. Autonomous vehicles, robots and drones all need computer vision to become commercially viable at scale. And these systems must be continuously retrained as the number of images from real-world use grows exponentially. Tesla leads in autonomous navigation, with an estimated 4 billion miles driven on Autopilot with the Hardware 2 and Hardware 3 platforms discussed below. Autopilot provides supervised distance and speed control, lane changing and hazard avoidance on the highway.

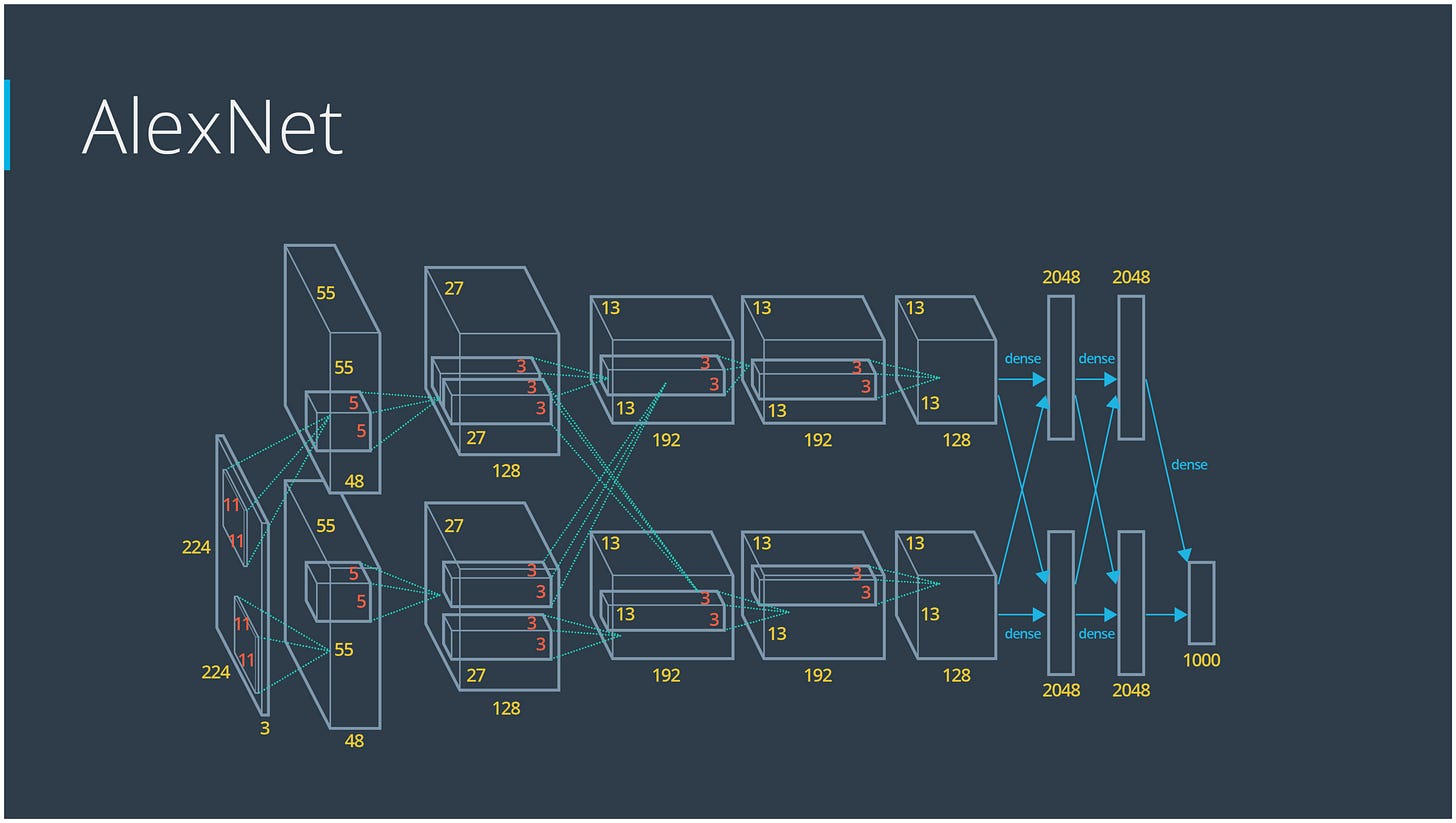

But what really advanced Computer Vision with these exponentially growing datasets was deep learning for image classification, driven by ImageNet. The ImageNet Large Scale Visual Recognition Challenge (ILSVRC) provides about 1.2 million labelled training images to train image classification models. The 2012 competition was won by Alex Krizhevsky with what is called a convolutional neural network (CNN), which had eight learned layers: five convolutional and three fully connected.

This advanced neural network model, referred to as AlexNet, arrived at a time when computer hardware was making significant advances: it was trained on 2 Nvidia GPUs with over 1,000 cores combined. The result was a system that could be trained in about a week, and winning the competition gave others the visibility to experiment with AlexNet. This led Facebook and Google to classify images on a much larger scale. It also enabled Tesla to take on Computer Vision to pursue autonomous vehicle navigation. Nvidia also saw the opportunity to advance GPU technology to support computer vision development.
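To make the CNN structure concrete, here is a small Python sketch that walks the AlexNet layer stack as described in the 2012 paper and applies the standard output-size formula, out = (in - kernel + 2 * padding) / stride + 1, to each convolution and pooling layer. It assumes a 227x227 RGB input (the paper states 224x224, but 227 is the size for which the published layer sizes work out) and leaves out the local response normalization and dropout steps, which have no learned weights. The function names are illustrative, not from any library.

```python
def conv_out(size: int, kernel: int, stride: int = 1, padding: int = 0) -> int:
    """Spatial output size of a convolution or pooling layer."""
    return (size - kernel + 2 * padding) // stride + 1


# (name, kernel, stride, padding, out_channels); pooling layers keep the
# channel count of the layer before them, so their out_channels is None.
FEATURES = [
    ("conv1", 11, 4, 0, 96),
    ("pool1", 3, 2, 0, None),
    ("conv2", 5, 1, 2, 256),
    ("pool2", 3, 2, 0, None),
    ("conv3", 3, 1, 1, 384),
    ("conv4", 3, 1, 1, 384),
    ("conv5", 3, 1, 1, 256),
    ("pool5", 3, 2, 0, None),
]
FULLY_CONNECTED = [4096, 4096, 1000]  # fc6, fc7, fc8 (1,000 ImageNet classes)


def alexnet_shapes(input_size: int = 227):
    """Return (name, channels, height, width) per layer and the flattened size."""
    size, channels = input_size, 3  # RGB input
    shapes = []
    for name, kernel, stride, padding, out_channels in FEATURES:
        size = conv_out(size, kernel, stride, padding)
        if out_channels is not None:
            channels = out_channels
        shapes.append((name, channels, size, size))
    return shapes, channels * size * size  # input width of fc6


def learned_layer_count() -> int:
    """Layers with trainable weights: the convolutions plus the fully connected layers."""
    convs = sum(1 for name, *_ in FEATURES if name.startswith("conv"))
    return convs + len(FULLY_CONNECTED)


if __name__ == "__main__":
    shapes, flat = alexnet_shapes()
    for name, c, h, w in shapes:
        print(f"{name}: {c} x {h} x {w}")
    print("flattened:", flat, "| learned layers:", learned_layer_count())
```

The 55x55 feature maps after the first layer shrink to 6x6 after the last pooling layer, giving 256 * 6 * 6 = 9,216 values feeding the fully connected classifier.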

Computer Vision at Tesla relies on scaled-up neural network (NN) models for autonomous navigation. They descend from AlexNet and are built with PyTorch, the open-source machine learning library for computer vision and natural language processing developed at Facebook. A 2-minute test drive in Antioch, CA with Tesla FSD Beta v8.2 [2021.4.11.1] is well worth watching to understand what Tesla is taking on with its AI strategy. Vehicles are only the first application!

To dive deeper into the overall Tesla AI system for Full Self Driving (FSD) on all roads, we need to highlight 3 key domains:

Tesla Dojo (supercomputer, automated image labelling to train AI models)

Objective: accelerate the work of the teams who currently label images by hand

Disclaimer: this is the most speculative of the 3 domains and is still a prototype system working towards v1 this year, but Tesla is on the leading edge here

Supercomputer optimized to train neural networks, using a custom chipset Tesla is designing for computer vision rather than more general AI

Automated image labelling to continuously grow the dataset used to train neural networks

Environment to run driving simulations

Prototype system already operational, with v1 expected mid-2021

Likely key topic for the anticipated Tesla AI Day in June/July

Future use could expand to other Computer Vision use cases (robotics, drones, etc.)
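Tesla has not published how Dojo will automate labelling. One widely used technique for cutting manual labelling work is confidence-based pseudo-labelling: the model's own prediction becomes the label when it is confident enough, and only uncertain images go to human labellers. The sketch below assumes that technique; the `Prediction` type, the `split_for_labelling` helper and the 0.95 threshold are hypothetical illustrations, not Tesla's pipeline.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    image_id: str
    label: str
    confidence: float  # model's probability for its top label, 0.0 to 1.0


def split_for_labelling(predictions, threshold=0.95):
    """Accept confident predictions as automatic labels; queue the rest for humans.

    The default threshold is an illustrative assumption, not a Tesla parameter.
    """
    auto_labelled, needs_human = [], []
    for p in predictions:
        if p.confidence >= threshold:
            auto_labelled.append((p.image_id, p.label))
        else:
            needs_human.append(p.image_id)
    return auto_labelled, needs_human


if __name__ == "__main__":
    preds = [
        Prediction("img-001", "stop_sign", 0.99),
        Prediction("img-002", "traffic_cone", 0.62),
        Prediction("img-003", "pedestrian", 0.97),
    ]
    auto, human = split_for_labelling(preds)
    print("auto-labelled:", auto)
    print("sent to human labellers:", human)
```

Raising the threshold trades labelling throughput for label quality, since fewer images are labelled automatically but those that are tend to be correct.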

Tesla Cloud (NN model trained with labelled images collected by Tesla vehicles)

Cloud services to support Tesla vehicles, mobile app, energy services, etc.

Hosts the inference NN model and the raw and labelled “edge case” images from the vehicle fleet

Controls over-the-air (OTA) updates that push the latest released NN model to the Tesla fleet

FSD Beta v9.0 NN model anticipated in June 2021, expanding the current “driver supervised” beta used in about 2,000 Tesla vehicles
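As a rough illustration of how an OTA rollout decides which vehicles receive a new NN model, here is a hypothetical version-gating rule in Python: a vehicle gets the update only if FSD was purchased and its installed build is older than the latest release. The helper names and the newer build number used in the example are assumptions for illustration; only the dotted format mirrors the FSD Beta v8.2 build 2021.4.11.1 mentioned earlier.

```python
def parse_version(version: str) -> tuple:
    """Turn a dotted build number such as '2021.4.11.1' into a comparable tuple of ints."""
    return tuple(int(part) for part in version.split("."))


def needs_update(installed: str, latest_release: str, has_fsd: bool) -> bool:
    """Hypothetical fleet rule: push the new NN model only to FSD owners on an older build."""
    return has_fsd and parse_version(latest_release) > parse_version(installed)


if __name__ == "__main__":
    # "2021.4.18" is a made-up newer build used only for this example.
    print(needs_update("2021.4.11.1", "2021.4.18", has_fsd=True))
    print(needs_update("2021.4.11.1", "2021.4.18", has_fsd=False))
```

Comparing integer tuples rather than raw strings avoids the classic bug where "2021.4.9" sorts after "2021.4.11" as text.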

Tesla Vehicle FSD hardware (image acquisition, edge computing, engineering controls)

Current Tesla vehicles with autopilot hardware 3.0 include 8 cameras for full 360-degree vision, a dashboard camera, radar with a range of about 550 ft and 12 ultrasonic (sonar) sensors with a range of about 26 ft.

Tesla vehicles with autopilot hardware 2.0 and 2.5 can be upgraded to 3.0 with the purchase of FSD (the current price is $10,000 but is expected to increase with the release of v9.0)

The inference FSD computer (shown above) includes 2 custom Tesla FSD chips optimized for autonomous vehicle navigation and for low power consumption, which makes computer vision practical in an electric vehicle. This Tesla FSD computer distinguishes autopilot hardware 3.0 from prior versions, which used Nvidia chipsets.

[Image 2: Tesla FSD computer]

FSD Beta currently requires driver supervision with manual activation of autonomous navigation and easy disengagement by the driver to resume control of the vehicle
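The supervised engagement model described in the list above behaves like a two-state machine: the car stays in manual mode until the driver explicitly activates FSD Beta, and any driver takeover immediately returns control. The Python sketch below assumes that simplified model; the `Mode` states and the event names (`activate`, `steer`, `brake`, `cancel`) are hypothetical, not Tesla's actual control logic.

```python
from enum import Enum


class Mode(Enum):
    MANUAL = "manual"
    FSD_BETA = "fsd_beta"


# Hypothetical driver inputs that hand control back to the human.
TAKEOVER_EVENTS = {"steer", "brake", "cancel"}


def next_mode(mode: Mode, event: str) -> Mode:
    """Driver-supervised engagement: FSD starts only on explicit activation,
    and any driver takeover returns control to the human immediately."""
    if mode is Mode.MANUAL and event == "activate":
        return Mode.FSD_BETA
    if mode is Mode.FSD_BETA and event in TAKEOVER_EVENTS:
        return Mode.MANUAL
    return mode  # every other event leaves the mode unchanged


if __name__ == "__main__":
    mode = Mode.MANUAL
    for event in ["activate", "steer", "brake", "activate"]:
        mode = next_mode(mode, event)
        print(f"{event} -> {mode.value}")
```

Making takeover the default for any driver input is the design choice that keeps the human as the supervisor: the system never has to decide whether a driver's intervention was intentional.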

The 2 Tesla FSD chips in the FSD computer follow the same on-device approach as the Apple chips in iPhones and iPad Pro that run the biometric facial recognition called Face ID: ARM-based CPU cores paired with dedicated neural network accelerators, which in Tesla's case are its own custom design. Running neural networks on the device itself keeps latency low, enabling capabilities that would not be feasible if the neural network were only available in the cloud. This low-latency architecture with on-device neural networks is an example of an emerging domain called edge computing. Tesla takes edge computing a step further by identifying “edge cases”: moments when the human driver's actions disagree with autopilot or FSD Beta.
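Tesla has not published the exact criteria it uses to flag edge cases. A minimal sketch, assuming a simple threshold comparison between what the driver did and what the on-board network planned, could look like this in Python. The `Action` fields, the `is_edge_case` helper and both tolerances are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Action:
    steering_deg: float  # steering wheel angle, degrees
    accel_mps2: float    # longitudinal acceleration, m/s^2 (negative = braking)


def is_edge_case(driver: Action, planned: Action,
                 steer_tol_deg: float = 15.0, accel_tol_mps2: float = 2.0) -> bool:
    """Flag a moment when the human driver's action diverges from what the
    on-board network planned. Tolerances are illustrative assumptions."""
    steer_gap = abs(driver.steering_deg - planned.steering_deg)
    accel_gap = abs(driver.accel_mps2 - planned.accel_mps2)
    return steer_gap > steer_tol_deg or accel_gap > accel_tol_mps2


if __name__ == "__main__":
    planned = Action(steering_deg=0.0, accel_mps2=0.0)  # network plans to continue straight
    hard_brake = Action(steering_deg=0.0, accel_mps2=-4.0)
    small_nudge = Action(steering_deg=5.0, accel_mps2=0.5)
    print(is_edge_case(hard_brake, planned))   # driver braked hard: candidate for upload
    print(is_edge_case(small_nudge, planned))  # minor difference: ignored
```

Running the check on the vehicle means only the rare disagreements need to be uploaded, rather than every frame the cameras capture.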

These edge cases are uploaded to the Tesla Cloud, where they are analyzed and labelled for inclusion in the FSD image training dataset. As this training dataset grows and training improves the FSD neural network model, a new version can be released once the improvements are substantial enough. The new FSD version is then pushed out to the Tesla fleet for those who have purchased FSD. So edge computing, coupled with the Tesla Cloud, accelerates FSD improvements, and will do so even more if Tesla Dojo succeeds in automating training.
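The release decision in this loop can be sketched as a simple gate: a retrained model replaces the fleet model only if it beats it by a minimum margin on some validation metric. The Python below assumes that rule; the metric, the 0.01 margin and the candidate scores are hypothetical, since Tesla has not published its release criteria.

```python
def should_release(current_score: float, candidate_score: float,
                   min_gain: float = 0.01) -> bool:
    """Release the retrained model only if it beats the fleet model by a margin.
    Scores are a hypothetical validation metric where higher is better."""
    return candidate_score - current_score >= min_gain


def data_engine(fleet_score: float, candidate_scores, min_gain: float = 0.01):
    """Walk through successive retrained candidates and record which ones ship.
    Returns the released candidate numbers and the final fleet score."""
    released = []
    for version, score in enumerate(candidate_scores, start=1):
        if should_release(fleet_score, score, min_gain):
            fleet_score = score  # the released model becomes the new baseline
            released.append(version)
    return released, fleet_score


if __name__ == "__main__":
    # Made-up scores for four successive retraining runs.
    released, final = data_engine(0.90, [0.905, 0.92, 0.925, 0.94])
    print("released candidates:", released, "final fleet score:", final)
```

Comparing each candidate against the currently deployed model, rather than the previous candidate, prevents a series of small, individually insignificant changes from shipping one after another.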

The Tesla AI system was described by Andrej Karpathy, Tesla's Director of AI, in an 11-minute video in the fall of 2019. The video describes the overall system, but it predates the developments of the last 18 months and so does not show the latest technology.

Unlike other autonomous vehicle strategies, Tesla's does not use LIDAR. The decision is informed by Elon Musk's experience with LIDAR at SpaceX, and Tesla opted to go without it based on a cost-benefit analysis. Current FSD Hardware 3.0 on Tesla vehicles includes radar and sonar. Tesla has also recently announced it is dropping sonar and radar from future FSD Hardware designs because, when the sensor data disagree, 360-degree computer vision with cameras always overrides the other data. Future FSD Hardware is expected to include only rapidly improving camera technology for 360-degree Computer Vision. This also lets Tesla accelerate camera-only Computer Vision development for other use cases where radar and sonar would be cost prohibitive or infeasible due to constraints like size or weight, as with drones.
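The "camera always wins" rule can be illustrated with a toy range-fusion function. The Python sketch below is hypothetical and not Tesla's sensor fusion: it assumes that when the camera and radar distance estimates disagree by more than a tolerance the camera estimate is used, and (as an extra assumption) that the two are averaged when they agree. Under such a rule, radar can only refine an answer vision already has, which is one way to read the argument for dropping it.

```python
from typing import Optional


def fused_distance(camera_m: float, radar_m: Optional[float],
                   disagreement_tol_m: float = 2.0) -> float:
    """Hypothetical vision-first arbitration for the distance to a lead vehicle.

    - No radar reading: use the camera estimate.
    - Readings disagree by more than the tolerance: the camera overrides radar.
    - Readings agree: average them (an illustrative assumption).
    """
    if radar_m is None:
        return camera_m
    if abs(camera_m - radar_m) > disagreement_tol_m:
        return camera_m
    return (camera_m + radar_m) / 2


if __name__ == "__main__":
    print(fused_distance(40.0, 41.0))  # agree: averaged
    print(fused_distance(40.0, 55.0))  # disagree: camera wins
    print(fused_distance(40.0, None))  # camera-only hardware
```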

The U.S. National Highway Traffic Safety Administration defines level 5 self-driving cars: “The vehicle can do all the driving in all circumstances, [and] the human occupants are just passengers and need never be involved in driving.”

Tesla FSD will continue to improve with billions of supervised autopilot miles driven, while Tesla compares crash data between autopilot and manual driving. The FSD Hardware is already believed to be capable of level 5 full autonomy, so regulatory approval is waiting for the software, the safety evidence and early adoption to converge. It looks like early level 4 adoption (geofenced full autonomy for specific routes) will happen first in the underground Vegas Loop, where autonomous navigation is basically already solved. Drivers in these Tesla vehicles will likely be eliminated within 12 months of launch because of all the engineering controls in this robotaxi use case.
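Comparing crash data between autopilot and manual driving comes down to normalizing crashes by miles driven. Tesla publishes a quarterly Vehicle Safety Report along these lines; the Python sketch below only shows the arithmetic, and every number in it is made up for illustration. A real comparison also has to account for autopilot miles being mostly highway miles, which this sketch ignores.

```python
def crashes_per_million_miles(crashes: int, miles: float) -> float:
    """Crash rate normalized to one million miles driven."""
    return crashes * 1_000_000 / miles


def safety_ratio(ap_crashes: int, ap_miles: float,
                 manual_crashes: int, manual_miles: float) -> float:
    """How many times lower the autopilot crash rate is than the manual rate."""
    manual_rate = crashes_per_million_miles(manual_crashes, manual_miles)
    ap_rate = crashes_per_million_miles(ap_crashes, ap_miles)
    return manual_rate / ap_rate


if __name__ == "__main__":
    # Hypothetical figures, not Tesla data.
    print(crashes_per_million_miles(5, 20_000_000))        # autopilot rate
    print(crashes_per_million_miles(10, 10_000_000))       # manual rate
    print(safety_ratio(5, 20_000_000, 10, 10_000_000))     # autopilot advantage
```

With these made-up inputs the autopilot rate is 0.25 crashes per million miles against 1.0 for manual driving, a ratio of 4.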

Florida has already approved full autonomy without a human driver on public roads, under a law effective July 1, 2019, for vehicles that achieve level 4 and 5 self-driving capability and safety.

This Vegas Loop Tesla robotaxi use case will greatly aid regulatory adoption once Orlando, Miami and other tourist destinations see full autonomy in a Tesla vehicle. FOMO wins! Some cities will opt for tunnels, but Las Vegas will see the benefits of above-ground level 5 autonomy as well. What if Tesla robotaxis piloted in the Vegas Loop could also bring more tourists above ground from Los Angeles to Las Vegas for a weekend? What if Disney World could bring more tourists to Epcot from Tampa and Miami? These business cases will drive early regulatory adoption on very targeted highways and in specific jurisdictions. If the economy is strong enough to support the business case, I think regulatory approval could happen for these niche cases by 2025, provided the crash data show a margin of safety. Human drivers are not perfect. Early adoption will continue to expand until the crash data become so convincing that wider regulatory approvals follow. Eventually, steering wheels will be eliminated to prevent human drivers from crashing vehicles that are far safer under autonomous navigation.

Tesla could also fail to achieve approved full autonomy for many years

The “march of 9s” toward regulatory approval requires deep learning to keep improving on the long tail of rare driving scenarios, so that Tesla computer vision navigates vehicles safely and crashes less often than human drivers do. Some engineers have argued that deep learning has limitations that will prevent Tesla from reaching its ultimate goal of full autonomy.
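The “march of 9s” refers to adding one more 9 to a success rate (99%, 99.9%, 99.99%, ...), and each additional 9 cuts the remaining failures tenfold. The Python below just does that arithmetic; what counts as an "event" (a mile, a trip, an intersection) is left open here, and the function names are illustrative.

```python
def failures_per_million(nines: int) -> float:
    """Expected failures per million events at a success rate with `nines` nines."""
    return 1_000_000 / 10 ** nines


def success_rate(nines: int) -> float:
    """Success rate with `nines` nines, e.g. 3 -> 0.999."""
    return 1 - 10 ** -nines


if __name__ == "__main__":
    for n in range(1, 7):
        pct = f"{success_rate(n):.{max(n - 2, 0)}%}"  # e.g. 99.9% for n = 3
        print(f"{pct} success -> {failures_per_million(n):g} failures per million")
```

Going from 99.9% to 99.9999% means going from 1,000 failures per million events to 1, which is why each new 9 gets harder: the remaining failures are the rarest, least represented scenarios in the training data.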

Another risk for Tesla is that its vehicles are easily identifiable on the road. Autonomous navigation accelerates the transition to sustainable energy through Transportation-as-a-Service in electric vehicles, as opposed to owning an internal combustion engine vehicle. Every day that transition is delayed, legacy companies across many industries earn around $2 billion combined. Given that Tesla stock went through years of targeted short selling, it is not hard to imagine Tesla vehicles being targeted to influence their crash data. Every Tesla crash also draws almost immediate media attention at the global, national and local level.

But Tesla also uses AI for applications other than autonomous navigation. Tesla vehicles can detect rain and automatically control the windshield wipers with FSD Hardware. Tesla also uses AI in software for its energy business: utility companies, and eventually corporations, use Autobidder software with Tesla Megapack battery deployments to buy and sell energy based on weather, market pricing and expected demand. This software operates in the background without supervision. The value proposition of Autobidder is significantly enhanced when grid battery storage is coupled with wind and/or solar power generation, making energy sales more profitable. Early adopters win, so this is a use case where AI accelerates the transition to sustainable energy.
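To show the basic idea of automated energy trading with a battery, here is a deliberately simple threshold arbitrage rule in Python: charge when the forecast price is low, discharge when it is high, otherwise idle. This is not Autobidder's algorithm, which is not described here; the thresholds, the hourly time step (so MW times 1 hour equals MWh), the prices and the lossless battery are all assumptions for illustration.

```python
def dispatch(price_forecast, capacity_mwh, power_mw, low_price, high_price, soc_mwh=0.0):
    """Greedy hourly schedule for a lossless battery.

    Charges at up to power_mw when the forecast price ($/MWh) is at or below
    low_price, discharges when it is at or above high_price, otherwise idles.
    Returns the schedule, net revenue in $, and the final state of charge.
    """
    schedule, revenue = [], 0.0
    for price in price_forecast:
        if price <= low_price and soc_mwh < capacity_mwh:
            energy = min(power_mw, capacity_mwh - soc_mwh)  # MWh bought this hour
            soc_mwh += energy
            revenue -= energy * price
            schedule.append(("charge", energy))
        elif price >= high_price and soc_mwh > 0:
            energy = min(power_mw, soc_mwh)  # MWh sold this hour
            soc_mwh -= energy
            revenue += energy * price
            schedule.append(("discharge", energy))
        else:
            schedule.append(("idle", 0.0))
    return schedule, revenue, soc_mwh


if __name__ == "__main__":
    # Made-up hourly price forecast in $/MWh.
    plan, revenue, soc = dispatch([20.0, 25.0, 50.0, 80.0, 90.0],
                                  capacity_mwh=2.0, power_mw=1.0,
                                  low_price=30.0, high_price=70.0)
    print(plan)
    print(f"net revenue: ${revenue:.2f}, final charge: {soc} MWh")
```

A production bidder would optimize over forecast uncertainty, round-trip efficiency and market rules rather than fixed thresholds, but the buy-low, sell-high structure is the same reason pairing storage with wind or solar generation improves the economics.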

Best,

Stephen

Nothing in this post is intended to serve as financial advice. Do your own research. I’m long TSLA.